What is ChatGPT and is ChatGPT safe?

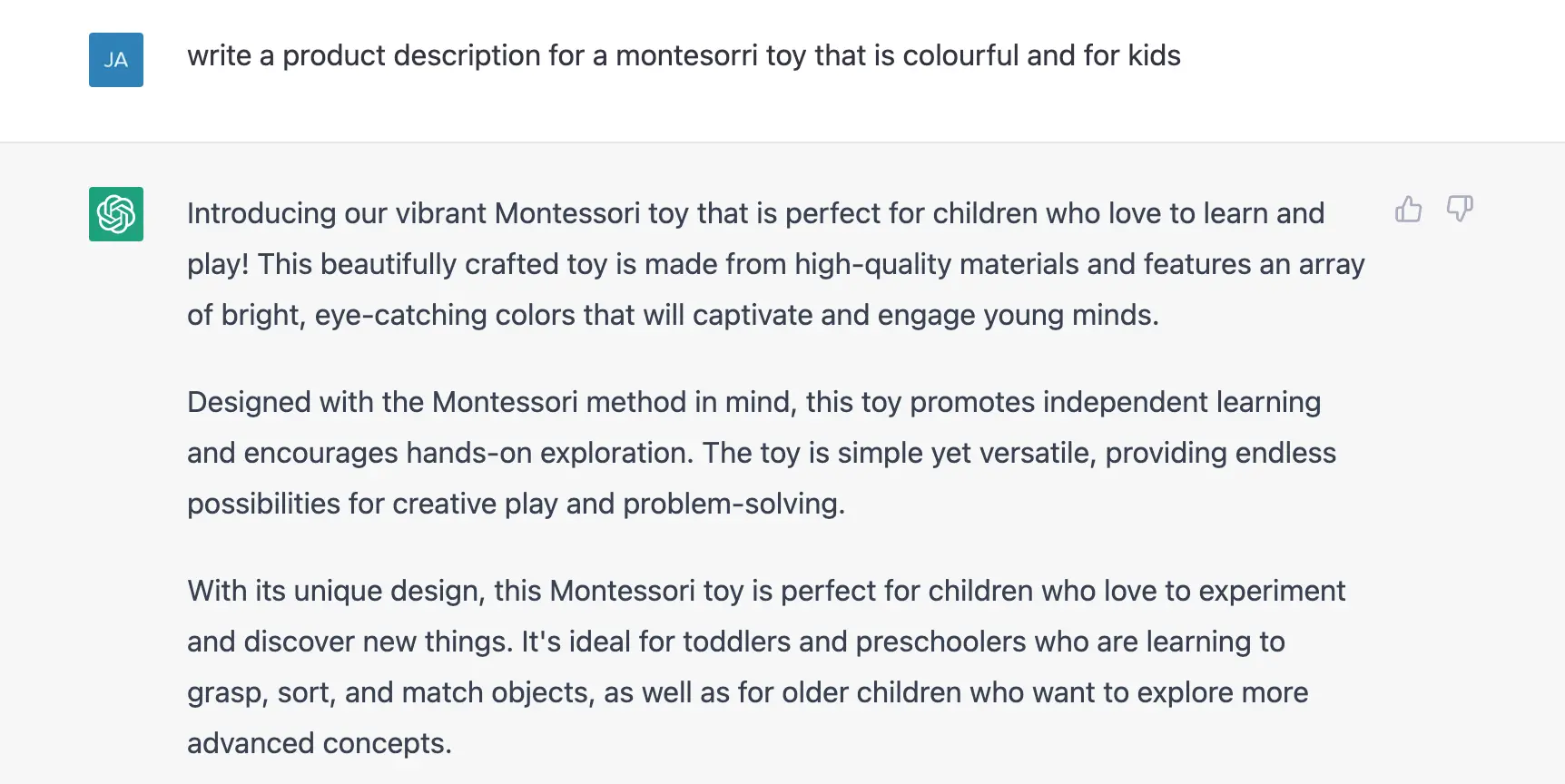

You may have heard of ChatGPT, but if you haven’t, ChatGPT is an interactive generative pre-trained transformer built on advanced language models… WOAH, let’s make that a bit easier to understand. It is essentially an artificial intelligence (AI) chatbot that can provide human-like responses to almost any question you may have. For example, the image below shows the response when I asked ChatGPT to “write a product description for a Montessori toy that is colourful and for kids”.

Asking ChatGPT to write me a product description for a Montessori toy.

All I did was write a question and mention a few important details, and away it went. It actually generated more text, but I cut the rest of the answer for simplicity’s sake. Yes, this tool is pretty powerful and could be used to automate a lot of menial tasks. It is no surprise that it gained over 1 million users within 5 days of its initial release on November 30, 2022 and now has over 100 million users. However, the tool has limitations and biases that need to be understood by the end user. To understand its origins in a nutshell, it helps to quickly look at who developed ChatGPT.

Who developed ChatGPT?

ChatGPT was developed by OpenAI, a company co-founded in 2015 by notable names including Elon Musk, Peter Thiel and Sam Altman. The co-founders pledged $1 billion to fund this project, which aimed to provide “positive human impact” through AI. It is important to note that OpenAI was originally established as an open-source, non-profit organisation. Neither description still applies.

As a side note, open-source means that the code is readily available to the public and can be modified and redistributed. This promotes community development and allows developers to collaborate in making software from non-proprietary tools. For example, Microsoft Windows is not an open-source operating system, but the Linux operating system is. Similarly, Microsoft Office (e.g. Word, Publisher, PowerPoint) is proprietary software that costs money, whereas LibreOffice is a free open-source alternative that is funded by community donations.

In 2018, Elon Musk cut ties with the company, supposedly due to a conflict of interest with his company Tesla and its development of AI for self-driving cars. However, Elon Musk has also shown frustration with OpenAI’s direction of becoming closed-source and having a for-profit arm. OpenAI is making big strides in the AI space but has ignited some frustration with its restructured business model.

Tweet from Elon Musk 4 years after his departure from OpenAI.

How does ChatGPT work?

ChatGPT was trained on a supercomputer that consisted of 285,000 CPU cores, a whopping 10,000 GPUs and 400 gigabits per second of network connectivity. This network speed would knock the socks off the NBN or ADSL2 that you have at home. Using such a powerful computer, ChatGPT was trained on 570GB of information, approximately 300 billion words, from sources such as books, Wikipedia and other written content on the internet. This is what we all wish was stored in our heads when exam time came around.

What is confusing to most people is how it responds in a human-like way. ChatGPT was trained on information written by humans, which included conversations. OpenAI designed the system to maximise the similarity between the output it provides and the information it was trained on. This is why the output is structured in human-like sentences. However, this also means ChatGPT’s outputs can be inaccurate, misleading or even untruthful. In simple terms, ChatGPT does not look your question up in a stored copy of that text. It takes the input you provide and builds a response word by word, based on the patterns it learned during training.
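To make the idea of “output that resembles the training text” a bit more concrete, here is a toy sketch in Python. It is nowhere near how ChatGPT is actually built. It only counts which word followed which in a tiny made-up text and then strings words together from those counts. The training text and the function names are my own assumptions for illustration.

```python
import random
from collections import defaultdict

# Tiny made-up "training text" (an assumption for illustration only).
TRAINING_TEXT = (
    "the toy is colourful . the toy is fun . "
    "the car is fast . the car is safe ."
)

def train_bigram_model(text):
    """Record, for every word, the words that followed it in the text."""
    followers = defaultdict(list)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word].append(next_word)
    return followers

def generate(model, start_word, max_words=8, seed=None):
    """Build a sentence by repeatedly picking a word seen after the last one."""
    rng = random.Random(seed)
    sentence = [start_word]
    while len(sentence) < max_words:
        options = model.get(sentence[-1])
        if not options:
            break  # no known follower, so stop here
        next_word = rng.choice(options)
        sentence.append(next_word)
        if next_word == ".":
            break  # a full stop ends the sentence
    return " ".join(sentence)

model = train_bigram_model(TRAINING_TEXT)
print(generate(model, "the"))
```

Run it a few times and you will see sentences shaped like the training text. It can also produce something like “the toy is fast .”, which reads fine but never appeared in the training text. That is a small-scale version of an answer sounding plausible without being backed by anything.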

What are the limitations of ChatGPT?

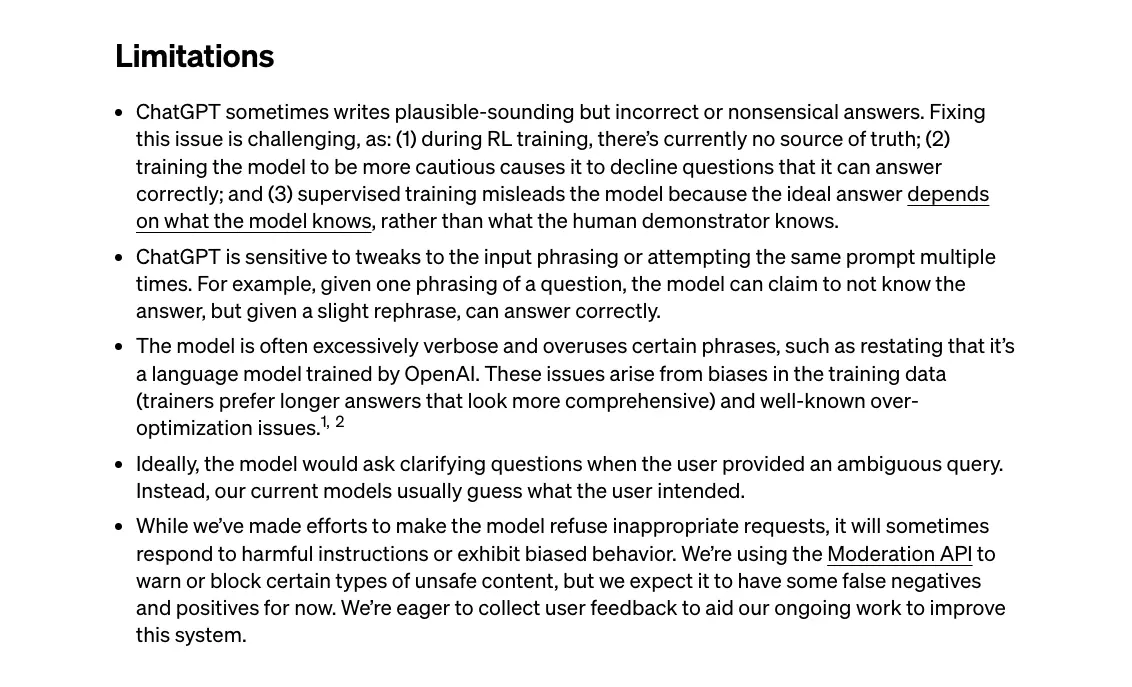

It is important to understand the limitations of ChatGPT, especially since it has become such a popular tool. The image below is sourced directly from a blog article about ChatGPT on OpenAI’s website.

List of several limitations of ChatGPT provided by OpenAI.

We humans are not perfect, and this directly affects how well ChatGPT performs. The fact is, ChatGPT was trained on data produced by humans, so it contains potential biases, harmful instructions and limited world knowledge. OpenAI states openly in its FAQs that ChatGPT has limited knowledge of world events beyond 2021. A lot can change in a day, let alone 2 entire years.

The first bullet point makes it clear that even if answers sound plausible, they can still be incorrect or nonsensical. The third bullet point states that ChatGPT is often “excessively verbose”, an issue that comes from a bias in trainers preferring longer answers that look more comprehensive. Simply put, it can often value quantity over quality. More words don’t necessarily mean a better answer in the real world.

ChatGPT also lacks context. As mentioned in the fourth bullet point, it would ideally ask clarifying questions to better understand, but instead it attempts to “guess” an answer. If you were to ask me what I thought of your new car and I couldn’t see it, I would usually ask to see it first. If I didn’t care to clarify, I would just say “It looks fast, safe and awesome!”. ChatGPT behaves like the second case: it doesn’t look to build its context further and instead produces answers based on your literal question and its first interpretation, which may differ from your own.

Is ChatGPT safe to use?

As long as the user understands the abilities and limitations of the tool, ChatGPT is safe and helpful to use. ChatGPT provides answers that can help with brainstorming new ideas, learning about new topics and completing basic tasks. I personally see it as a tool that can assist creatives and academics with tedious and boring tasks. From this perspective, it is very exciting to see what the next iterations of ChatGPT may hold.

It is worth mentioning that if you ask ChatGPT the exact same question twice, its answer may vary but the core of its response will remain the same. Remember too that more than 100 million people have access to ChatGPT and may be asking questions similar to yours. It is then your decision whether using ChatGPT’s answers directly will be sufficient for your dealings with people, your assignments or any other task.
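One likely reason answers vary between attempts is that language models commonly pick each next word by weighted chance rather than always taking the single most likely one. Here is a minimal sketch of that idea, assuming some made-up probabilities for the word that follows “This toy is …”. The numbers and names are hypothetical and are not taken from ChatGPT.

```python
import random
from collections import Counter

# Hypothetical probabilities for the next word (made up for illustration).
NEXT_WORD_PROBS = {"colourful": 0.6, "fun": 0.3, "educational": 0.1}

def pick_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = list(probs.values())
    return rng.choices(words, weights=weights, k=1)[0]

# Asking the "same question" twice can give two different words.
rng = random.Random()
print(pick_next_word(NEXT_WORD_PROBS, rng))
print(pick_next_word(NEXT_WORD_PROBS, rng))

# Over many tries the most likely word still dominates.
counts = Counter(pick_next_word(NEXT_WORD_PROBS, rng) for _ in range(1000))
print(counts.most_common())
```

Any single answer can differ from the last one, but across many tries the most likely word wins most often. That fits the pattern of the wording changing while the core of the response stays the same.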

Summary

ChatGPT is a pretty cool technology that will hopefully continue to provide “positive human impact” as opposed to unsafe and harmful consequences. So far, it has shown its potential for improving work tasks and inspiring deeper thought. Its burst of popularity is unprecedented and with very good reason. However, just like talking to another human, users need to understand that ChatGPT may not provide the definitive truth and is not a sentient being that is above us… at least for now! It is built on advanced language models derived from datasets created by humans for humans.

ChatGPT inherits our greatness and our badness all in one. It can provide accurate facts, summarise complex topics and even rewrite paragraphs for you. However, it will not be sensitive to your feelings, may provide hurtful or harmful answers and could provide misleading information. This is why I believe it works better as an assistive tool than as a complete replacement for human actions. It is safe to use as long as each person remains fully aware that it is not always a source of truth and won’t solve every problem.